Tom Affinito

VP Corporate Development @ Skai

Data-driven decision making is the gold standard in marketing. Whether a decision is strategic, such as annual budget allocation, or tactical, such as the day-to-day optimization of keyword bids or social ad targeting, a choice made without some sort of evidence is deemed opinion-based and inherently less valid. Learn how marketing experiments are the key to helping organizations validate and calibrate data-driven decision making.

In a recent survey of practitioners on data-driven marketing, when asked about their most important data objectives, the top answer was “basing more decisions on data analysis”. However, when asked about the biggest data challenges they face, the top answer was “being able to make more data-based decisions” with 81% of marketers saying that they “consider implementing data-driven marketing strategies somewhat to extremely complicated.”

Many of us make what we consider to be data-driven decisions every day, but there’s an essential part of the equation that many people get wrong or don’t understand at all: data-driven decision making should not be taken for granted. It needs to be constantly tested and tuned via ongoing experiments.

It follows: what could be more important to any brand than validating that its decision-making process is top-notch?

After all, would you want to get lost in the woods with a faulty compass?

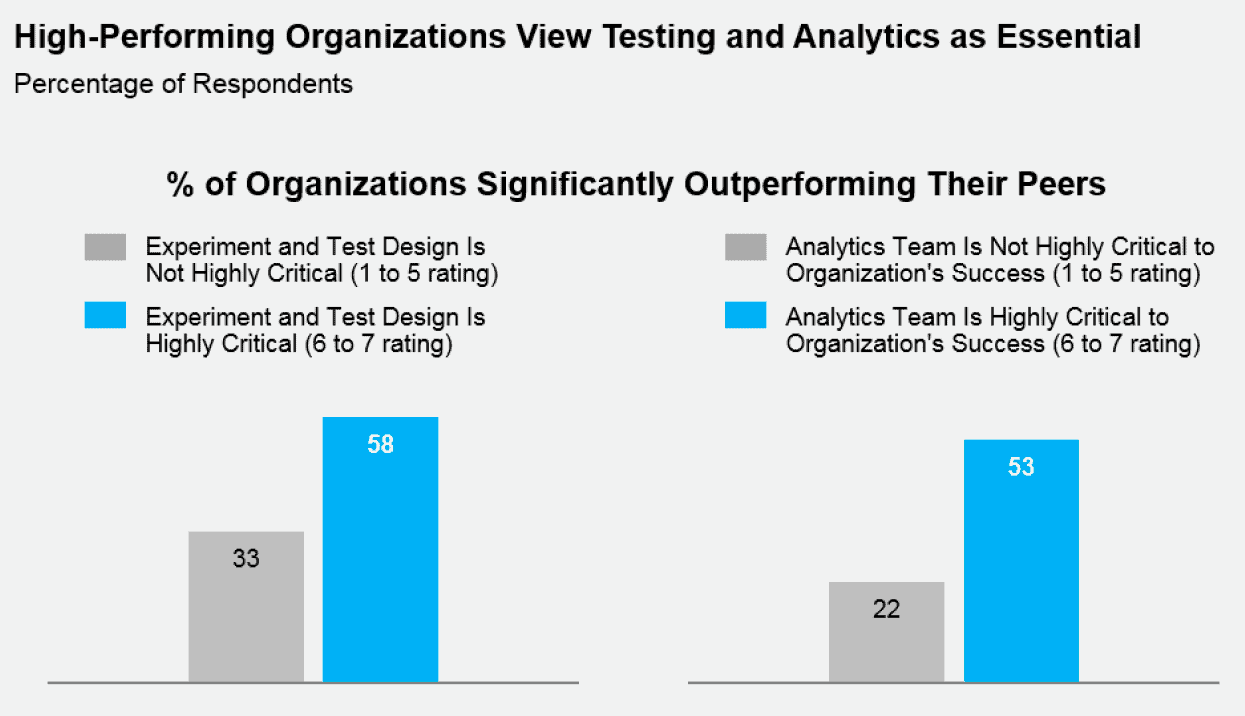

According to research from Gartner:

“Having a test-and-learn culture empowers your organization to make customer experience, marketing and product decisions that cut through opinion, indecision, and uncertainty with data and insight. The results and outcomes mitigate the risk of making the wrong decisions, reduce or eliminate wasted efforts and resources and improve revenue.”

Marketers have long been expected to absorb a chart or graph on marketing performance and make educated assumptions on how to proceed. For example, if a brand has invested in a new social channel and the ROI of the campaigns is consistently below expectations, the decision might be made to move the budget to another publisher. However, the process for vetting those decisions is often fuzzier. Did the budget perform better with the other publisher? Was it the best decision? Should it have been allocated across a handful of partners instead? Could only half of the budget have been moved?

These questions put the spotlight on the state of today’s data-driven decision making. Often, a decision is deemed good or bad based only on whether the high-level goal went up or down. But only a marketing organization committed to continual validation via experiments can truly say that its decision-making practice is sound.

And marketing experiments matter. Organizations that significantly outperform their competitors are almost twice as likely to make testing and experimentation a marketing priority.

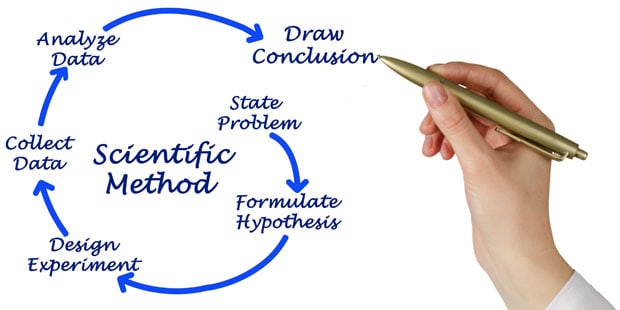

The scientific method is a process that most of us are exposed to in middle school science class, and at its core is a simple point of view on testing that marketers must adopt: every assumption must be coupled with a hypothesis, tested in a stable environment, given the proper time to collect significant data, and then analyzed.

Without this approach, a data-driven decision is simply an educated hypothesis. Probably better than a wild guess, but who knows? A valid testing methodology is needed to know the difference.
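As a rough illustration of the hypothesis-test-analyze loop described above, here is a minimal sketch of one common way to check whether a test group really outperformed a control group: a two-sided, two-proportion z-test on conversion rates. The function name, the choice of test, and the numbers in the usage example are assumptions made for illustration; they are not taken from the survey or research cited in this article, and other statistical tests may suit a given experiment better.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a / n_a: conversions and visitors in the control group.
    conv_b / n_b: conversions and visitors in the test group.
    Returns (z, p_value). Hypothetical helper, for illustration only.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up example: 2.0% vs 2.6% conversion, 10,000 visitors per group.
z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p-value = {p:.4f}")
```

A small p-value (conventionally below 0.05) suggests the difference is unlikely to be noise alone; a large one means the "educated hypothesis" has not yet been validated.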

Marketing experiments are a critical component of best-in-class, data-driven decision making.

This sounds like a lot of work, but it doesn’t have to be. Yes, processes may need to change and new tools might need to be utilized, but this is what is required in a true data-driven marketing organization.

Marketing experiments aren’t “nice to have”. They are a critical component.

And there are ways to run these experiments fairly easily, isolated from the bulk of the marketing, so that they don’t disrupt the business. One option from Skai is Impact Navigator, a SaaS solution built specifically for test-and-learn marketing orgs to measure the incrementality and impact of their marketing programs. The platform can easily handle multiple marketing experiments to minimize test duration while maintaining statistical significance.

Based on just how much emphasis marketers put on data-driven decision making, it’s not that hard to imagine that one day every functional group in every marketing organization will always be testing. The creative teams will be testing messaging and visuals, the media team will be testing ad formats and spend, the channel specialists will be testing optimization and automation, the CMO will be testing channel and publisher mix…the future of marketing is marketing experiments.

The most beneficial outcome of ongoing testing across the organization is the improvement in a brand’s overall data-driven decision making. Brands will begin to learn which metrics matter, how to shorten tests as much as possible while still getting valid results, and at what cadence testing needs to take place in order to optimize their programs.
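To make the trade-off between test length and valid results more concrete, the sketch below estimates how many visitors each group needs to detect a given lift, and how many days that might take. It uses the standard normal-approximation sample-size formula for comparing two proportions; the function names, the default significance level and power, and the traffic figures are all assumptions for illustration, not a description of how any particular platform works.

```python
import math
from statistics import NormalDist


def sample_size_per_group(p_control, p_test, alpha=0.05, power=0.80):
    """Approximate visitors needed per group to detect p_control vs p_test.

    Uses the normal-approximation formula for a two-sided test on two
    proportions. Hypothetical helper, for illustration only.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_power = NormalDist().inv_cdf(power)          # desired statistical power
    variance = p_control * (1 - p_control) + p_test * (1 - p_test)
    effect = p_test - p_control
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


def estimated_test_days(n_per_group, daily_visitors, groups=2):
    """Days needed if daily_visitors are split evenly across all groups."""
    return math.ceil(n_per_group * groups / daily_visitors)


# Made-up example: detect a lift from 2.0% to 2.6% with 2,000 visitors a day.
n = sample_size_per_group(0.020, 0.026)
print(n, "visitors per group,", estimated_test_days(n, 2_000), "days")
```

Under these assumptions, a larger expected lift or more daily traffic shortens the test, while chasing a smaller lift lengthens it quickly, since the required sample grows with the inverse square of the effect size.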

By leveraging marketing experiments, marketers can uplevel data-driven decision making. It doesn’t mean that every decision requires a white-lab-coat approach with years of testing—if anything, it needs to be a non-disruptive and easy approach. But with a slight change in process and a commitment to this standard, the opportunity for brands to improve their marketing ROI and performance is high.
