Tom Affinito

VP Corporate Development @ Skai

Marketing experiments can benefit brands in many ways. Not only can they help advertisers navigate their data-driven marketing, but they can also be used to figure out the right data to use for a given challenge. In this post, Skai’s Tom Affinito explains how validating and calibrating your dataset via marketing experiments is foundational to best-in-class data-driven decision making.

In my previous post, Data-Driven Decision Making: How Good is Your Marketing Org at It? Without Experiments, You Will Never Know, I discussed a conundrum: data-driven decision making is expected in marketing, yet most marketers haven’t been rigorously trained in the process. Most decisions made each day (where to invest the marketing budget, how to invest it, which ads are working, which bids are best, and so on) could be flawed.

What percentage of your marketing organization’s decisions would you say is rooted in sound data-driven decision-making?

The only way to truly validate and calibrate your data-driven decision process is through marketing experiments. An ongoing test-and-learn culture is the best approach, and I even hypothesized that marketing experiments could eventually become part of every marketer’s job.

From my last post:

“Based on just how much emphasis marketers put on data-driven decision making, it’s not that hard to imagine that one day every functional group in every marketing organization will always be testing. The creative teams will be testing messaging and visuals, the media team will be testing ad formats and spend, the channel specialists will be testing optimization and automation, the CMO will be testing channel and publisher mix…the future of marketing is marketing experiments.”

Telling whether a past decision was a good or bad one is just one thing marketing experiments can help you do.

But how do you know which data to base your decision on? Marketing experiments can help answer this as well.

“Data” might be the vaguest term in our industry.

Data can be almost anything: facts, stats, charts, graphs, etc. But there are no standards here. Do you use one stat? Two stats? More? For example, if a digital marketer is analyzing an ad to decide whether or not to pause it or let it keep running, just a few of the available metrics might be:

What is the right data needed for this data-driven marketing decision? In the example above, one marketer may compare CTRs of other ads and decide it’s not working well and pause it. Another marketer might look at the conversion rate and keep the ad running. Still another may look at both the CTR and the conversion rate and come up with another course of action.

What about the time range? Should a marketer look at just the previous 30 days? Year-over-year comparisons? Each metric changes based on the time range chosen and could absolutely push a decision in different directions.
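To make the last two points concrete, here is a minimal sketch of how the same ad can earn a different verdict depending on the metric and the time window. Everything in it is an assumption made for illustration: the daily numbers, the benchmarks, and the `DailyStats` and `decide` names are hypothetical, not taken from any real campaign or platform.

```python
from dataclasses import dataclass


@dataclass
class DailyStats:
    impressions: int
    clicks: int
    conversions: int


def ctr(days):
    """Click-through rate over the given days: clicks / impressions."""
    impressions = sum(d.impressions for d in days)
    return sum(d.clicks for d in days) / impressions if impressions else 0.0


def conversion_rate(days):
    """Conversion rate over the given days: conversions / clicks."""
    clicks = sum(d.clicks for d in days)
    return sum(d.conversions for d in days) / clicks if clicks else 0.0


def decide(days, metric, benchmark, window):
    """Keep the ad if the metric over the last `window` days meets the benchmark."""
    recent = days[-window:]
    return "keep" if metric(recent) >= benchmark else "pause"


# Hypothetical history: 60 older days with more clicks, then 30 recent days
# with fewer clicks but the same number of conversions per day.
history = [DailyStats(1000, 30, 1)] * 60 + [DailyStats(1000, 10, 1)] * 30

# Hypothetical benchmarks: 2% CTR, 5% conversion rate.
for name, metric, benchmark in [("CTR", ctr, 0.02), ("conversion rate", conversion_rate, 0.05)]:
    for window in (30, 90):
        print(f"{name}, last {window} days: {decide(history, metric, benchmark, window)}")
```

With these made-up numbers, all four combinations of metric and window disagree with a neighbor: CTR says pause over 30 days but keep over 90, while conversion rate says the opposite. Nothing in the data itself tells you which reading is right.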

Marketing experiments are the answer. A test-and-learn marketing organization can validate and calibrate which datasets drive the best and most accurate decision-making. For example, several rounds of experiments using different combinations of data each time would reveal the best data to drive a decision. Over time, it would become clear which data should be used in each situation.
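One way to picture those rounds of experiments is as a scorecard. The sketch below assumes that, for each tested ad, you have logged what each candidate dataset would have recommended and what a controlled experiment later showed to be the better action. The records, the dataset names, and the `score_datasets` helper are all hypothetical and exist only to illustrate the bookkeeping.

```python
from collections import defaultdict

# Hypothetical experiment log: one record per tested ad.
experiments = [
    {"recommendations": {"ctr_only": "pause", "cvr_only": "keep", "ctr_and_cvr": "keep"},
     "measured_best": "keep"},
    {"recommendations": {"ctr_only": "pause", "cvr_only": "keep", "ctr_and_cvr": "pause"},
     "measured_best": "pause"},
    {"recommendations": {"ctr_only": "keep", "cvr_only": "keep", "ctr_and_cvr": "keep"},
     "measured_best": "keep"},
    {"recommendations": {"ctr_only": "keep", "cvr_only": "pause", "ctr_and_cvr": "pause"},
     "measured_best": "pause"},
]


def score_datasets(experiments):
    """Share of experiments in which each dataset's recommendation matched the measured outcome."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for exp in experiments:
        for name, recommendation in exp["recommendations"].items():
            totals[name] += 1
            if recommendation == exp["measured_best"]:
                hits[name] += 1
    return {name: hits[name] / totals[name] for name in totals}


scores = score_datasets(experiments)
for name, accuracy in sorted(scores.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {accuracy:.0%} of recommendations matched the experiment")
```

A real program would need many more rounds than four before trusting the ranking, and would likely track it separately for each type of decision.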

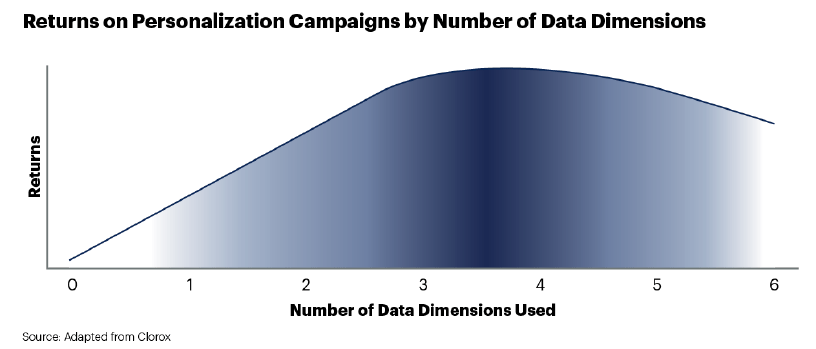

In the Gartner article Data Dimension Prioritization Process in Marketing, the Clorox marketing team discovered through testing that using fewer data points actually yielded better results in their personalization program.

“Clorox conducted market research across a wide range of diverse consumer products and target markets to test the incremental impact of each added data dimension for any given campaign. Clorox’s marketing teams were surprised to find how consistently a small number of data dimensions generated a far better return than using many data dimensions; the optimal number of data dimensions was between three and four.

“Clorox, therefore, limits marketing teams to using three or four data dimensions in their campaigns. Before, teams would have sought to use as many data dimensions as possible, especially with targeted products. Now, rather than thinking of data dimension selection as a brainstorming activity, marketing teams must think of it as a business decision.

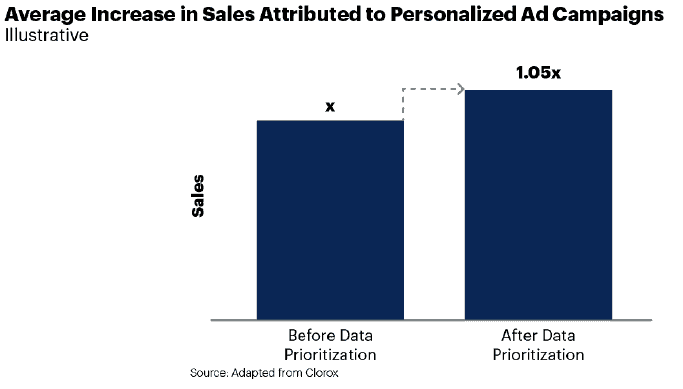

“[This process] enables teams to seek maximum impact while preventing them from falsely believing ‘more is always better’. By limiting the number of data dimensions used in its campaigns, the marketing organization at Clorox significantly reduced the costs and complexity associated with personalizing content. In one year, Clorox has seen a 5% increase in sales directly attributable to this practice.”

Marketing experiments to discover the data for decision-making

It’s hard to be confident in your decision-making unless you are confident in which data to use to make the decision. Your decision-making process may be absolutely world-class, but its recommendations can still be flawed if they are rooted in the wrong datasets.

Marketers have to be experts at both data-driven decision making and evaluating data. With global media spending at $665 billion this year (and growing to $855 billion as soon as 2023), marketing leaders are going to come under more scrutiny for justifying their investment choices, especially in the highly disruptive and dynamic markets we are facing across today’s automotive, financial services, retail, ecommerce, CPG and travel verticals. An optimal use of the right data, and increased marketing insight for validating which data is the right data, is becoming essential.

Whether a CMO is making channel-level budget allocations or a practitioner is optimizing media buys—no matter how smart or experienced they are—no one can consistently and accurately make great data-driven decisions without marketing experiments to validate and calibrate the data-decision process itself.
